In Part 1 of this two-part mini-series, we noted that there are trillions of dollars’ worth of equipment in factories around the globe. We also noted that if any of these devices (pumps, motors, generators, fans, etc.) stop working unexpectedly, they can disrupt the operations of the factory. In some cases, a seemingly insignificant unit can bring an entire production line to a grinding halt.

We also considered various maintenance strategies, including reactive (run it until it fails and then fix it), pre-emptive (fix it before it even thinks about failing), and predictive (use artificial intelligence (AI) and machine learning (ML) to monitor the machine’s health, looking for anomalies or trends, and guiding the maintenance team to address potential problems before they become real problems).

At the end of Part 1, we were left feasting our eyes on the physical portion of my first AI/ML-based system as shown below. In this column, we will walk through the process of pulling everything together.

The physical portion of my first AI/ML-based system (Image source: Max Maxfield)

Just to remind ourselves as to the overall plan, my son (Joseph the Common Sense Challenged) can occasionally be goaded into using the vacuum cleaner. Unfortunately, he rarely remembers to check to see if it’s already full before he starts. As a result, he oftentimes expends a lot of effort making a lot of noise without achieving anything useful with regard to actually picking up any dust or dirt.

It was for this reason that I decided it would be useful to create an AI/ML system that can determine when the vacuum cleaner’s bag is full and send a notification to my smartphone informing me as to this situation.

As a starting point, the software I used to create my AI/ML system was NanoEdge AI Studio, which is developed by those clever guys and gals at Cartesiam (you can access a free trial version of this little rascal from their Download page).

The microcontroller development board I opted to use was the Arduino Nano 33 IoT. I selected this for a number of reasons, not least that it is capable of running all of the experiments described in the TinyML book authored by Pete Warden and Daniel Situnayake. There’s also the fact that it has Bluetooth and Wi-Fi capability, which will be useful when it comes to sending emails and/or text messages. Perhaps the most important reason, however, is that I’m not exactly a software hero, but I am reasonably comfortable with the Arduino’s integrated development environment (IDE).

This is probably a good time to introduce Louis Gobin from Cartesiam. Due to the fact that I’m less than gifted on the software development front, Louis was kind enough to spend several hours guiding me through the process. In the picture below, we see Louis looking out of the middle monitor of the troika of screens on my desk. I fear his smile is in response to one of my helpful suggestions (at least he didn’t laugh out loud).

Louis Gobin from Cartesiam trying not to laugh (or cry) at one of my helpful suggestions (Image source: Max Maxfield)

When it came to the sensor portion of my AI/ML system, my knee-jerk reaction was to employ something like a 3-axis accelerometer to monitor the vibration patterns exhibited by the vacuum cleaner, and to use changes in these patterns to determine when the bag was full.

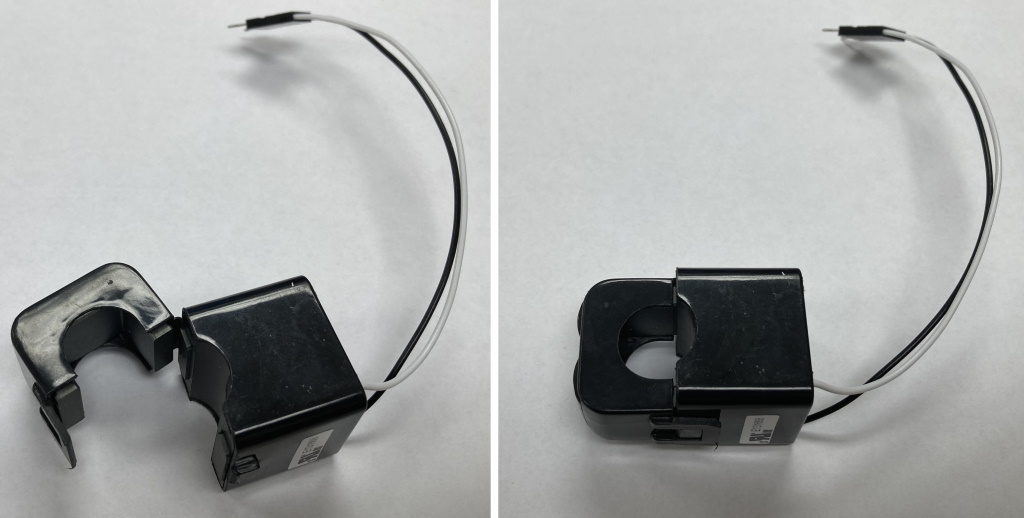

NanoEdge AI Studio supports a wide range of sensor types, including 3-axis accelerometers, but Louis suggested that it might be easier for me to use a simple current sensor. In the same way that an injury to one part of the body can cause reflected pain in another area, so too can a current sensor be used to detect all sorts of weird and wonderful aspects of a machine’s operation. Thus, we decided to use a small, low-cost CR3111-3000 split-core current transformer from CR Magnetics.

CR3111-3000 split-core current transformer from CR Magnetics (Image source: Max Maxfield)

The great thing about this little scamp is that you can open it up, wrap it around the current-carrying wire, and clip it closed again, thereby relieving you from the task of slicing through any wires. Speaking of which, the vacuum cleaner in question is my trusty Dirt Devil as shown below.

Meet my trusty Dirt Devil (Image source: Max Maxfield)

I don’t know about you, but things seem to multiply in our house. For example, we have at least three vacuum cleaners to my knowledge (there could be more lurking in rarely used cupboards and closets). Ever since… let’s call it “the incident”… I’ve been forbidden by my wife (Gina the Gorgeous) to use her favored device. This is what prompted me to splash the cash on my Dirt Devil, which has proved useful on many an occasion, not least that no one complains if I bring it into the office to take part in my experiments.

Still and all, as they say, I was loath to start messing around with the Dirt Devil’s power cord, so I invested in a 1-foot extension cable. To be honest, I didn’t even know these things were available until I started looking. If you’d asked me a couple of weeks ago, I would have said that a 1-foot extension cable was as close to being useless an item as I could think of. Now, by comparison, I think it’s a wonderful creation and I doff my cap to whoever came up with the idea (I assume it was someone whose partner didn’t want them performing any more experiments on their household appliances).

One-foot extension cable with 1.5” of insulation stripped away (Image source: Max Maxfield)

The first thing I did was to strip about 1.5” of the outer insulation from the middle of the extension. The second thing I did was to say “Hmmm, which color represents what?” If you were born in the USA, which is where I currently hang my hat, you probably already know that, over here, black is the live wire, white is the neutral wire, and green is the ground (or earth) wire. The problem is that when I grew up in England, black was neutral, red was live, and green was ground (I understand that things have changed since I left, with the current UK standard employing brown for live, blue for neutral, and yellow-green stripes for ground). And, of course, you take your chances in other parts of the world.

Now for the weasel words (remember that eagles may soar, but weasels rarely get sucked into jet engines), which are that you should never mess around with electric wiring unless you are qualified to do so or you are working with someone who is.

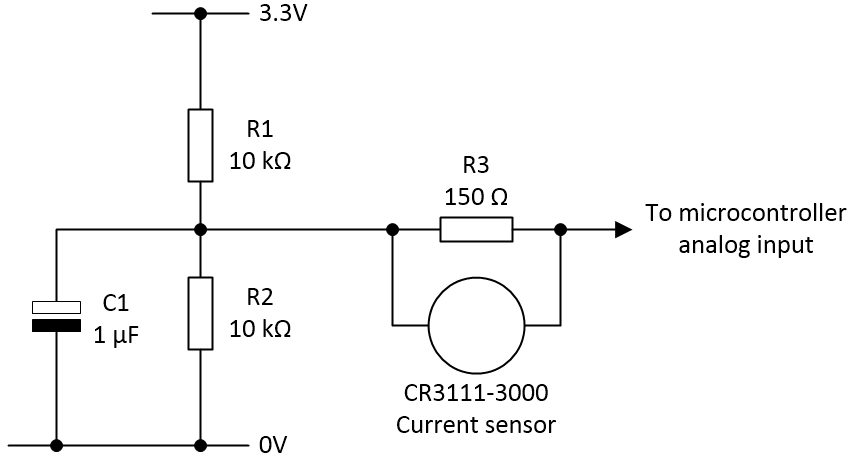

The next step was to build the current sensing circuit that would allow me to use one of the analog inputs on my Arduino Nano 33 IoT to read the value from the CR3111-3000 split-core current transformer. This circuit is shown below.

The current sensing circuit (Image source: Max Maxfield)

The CR3111-3000 transforms the current driving the machine into a much smaller equivalent current with a 1000:1 ratio; for example, 1 A flowing in the power cord produces 1 mA in the transformer’s secondary coil.

I’m powering my Arduino Nano 33 IoT with a 5 V supply, but its inputs and outputs operate at 3.3 V and its on-board regulator provides a 3.3 V output, so that’s what I’m using to power this circuit.

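Resistors R1 and R2 act as a potential divider, forming a “virtual ground” at 1.65 V (half of the 3.3 V supply). Because the transformer’s output is AC, referencing it to this midpoint means the signal presented to the analog input swings above and below 1.65 V rather than going negative. Capacitor C1 forms part of an RC noise filter. And resistor R3 is connected across the CR3111-3000’s secondary (output) coil to perform the role of a burden resistor, which converts the current flowing through it into a proportional voltage (V = I × R).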
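To make the transformer arithmetic concrete, here is a minimal C++ sketch of the conversion from an analog reading back to mains current. The article doesn’t give component values, so the 100 Ω burden resistor (R3) and the 12-bit ADC range used here are assumptions for illustration only; the 1000:1 ratio, 3.3 V reference, and 1.65 V virtual ground come from the text. With those assumptions, an illustrative 10 A RMS load becomes 10 mA RMS on the secondary, which develops 1.0 V RMS (about 1.41 V peak) across R3. Riding on the 1.65 V virtual ground, that swings between roughly 0.24 V and 3.06 V, inside the 0 V to 3.3 V input range.

```cpp
#include <cmath>
#include <vector>

constexpr double kTurnsRatio    = 1000.0;  // CR3111-3000: 1000:1 (from the article)
constexpr double kVref          = 3.3;     // ADC reference = 3.3 V rail
constexpr double kVirtualGround = 1.65;    // midpoint set by R1/R2
constexpr double kBurdenOhms    = 100.0;   // ASSUMED value for R3 (not given)
constexpr int    kAdcMaxCount   = 4095;    // ASSUMED 12-bit conversion

// RMS voltage developed across the burden resistor for a given primary RMS current.
double burdenVoltsRms(double primaryAmpsRms) {
    double secondaryAmps = primaryAmpsRms / kTurnsRatio;  // scale down 1000:1
    return secondaryAmps * kBurdenOhms;                   // Ohm's law across R3
}

// True if the AC peak, riding on the virtual ground, stays within 0..kVref.
bool fitsAdcRange(double primaryAmpsRms) {
    double peak = burdenVoltsRms(primaryAmpsRms) * std::sqrt(2.0);
    return kVirtualGround - peak >= 0.0 && kVirtualGround + peak <= kVref;
}

// Convert one raw ADC count to the instantaneous primary (mains-side) current.
double countsToPrimaryAmps(int counts) {
    double pinVolts = counts * kVref / kAdcMaxCount;     // count -> pin voltage
    double burdenVolts = pinVolts - kVirtualGround;      // remove the 1.65 V offset
    double secondaryAmps = burdenVolts / kBurdenOhms;    // current through R3
    return secondaryAmps * kTurnsRatio;                  // scale back up 1000:1
}

// RMS primary current over a buffer of raw samples.
double rmsPrimaryAmps(const std::vector<int>& samples) {
    if (samples.empty()) return 0.0;
    double sumSq = 0.0;
    for (int c : samples) {
        double amps = countsToPrimaryAmps(c);
        sumSq += amps * amps;
    }
    return std::sqrt(sumSq / samples.size());
}
```

On real hardware the counts would come from `analogRead()`. In practice it is safer to subtract the measured average of a buffer than to trust the nominal 1.65 V, since resistor tolerances shift the midpoint slightly.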

Once this circuit was in place, we were ready to rock and roll, so I dispatched the butler to fetch my rock-and-roll trousers and, while we were waiting for his return, I launched NanoEdge AI Studio.

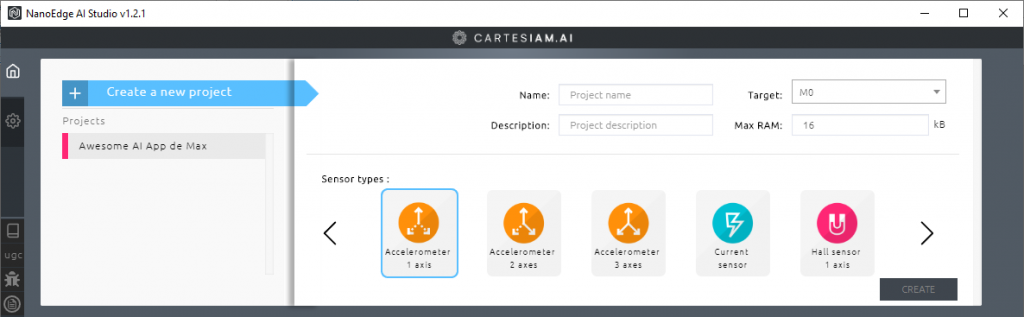

The upper portion of the NanoEdge AI Studio launch screen (Image source: Max Maxfield)

The first thing you are invited to do is to either create a new project or open an existing project. In the above image, we see my existing project, which I modestly called “Awesome AI App de Max” (as always, I pride myself on my humility).

Let’s suppose we were creating this project anew. In this case, we would give it a name (like “My Amazing AI Project”), add a short description, select a target processor (the Arm Cortex-M0+ in the case of the Arduino Nano 33 IoT), and specify the maximum amount of RAM we wish to use. Louis suggested that we commence with 6KB of RAM to see how that went (this gives the system something to aim at — you can always change it later).

One more task is to select the number and type of sensors you are using. Note that you don’t have to select specific part numbers — just the general type of device to give the system a clue what you are trying to do. In our case, we selected a single current sensor, but you can have multiple sensors of different types depending on your target application.

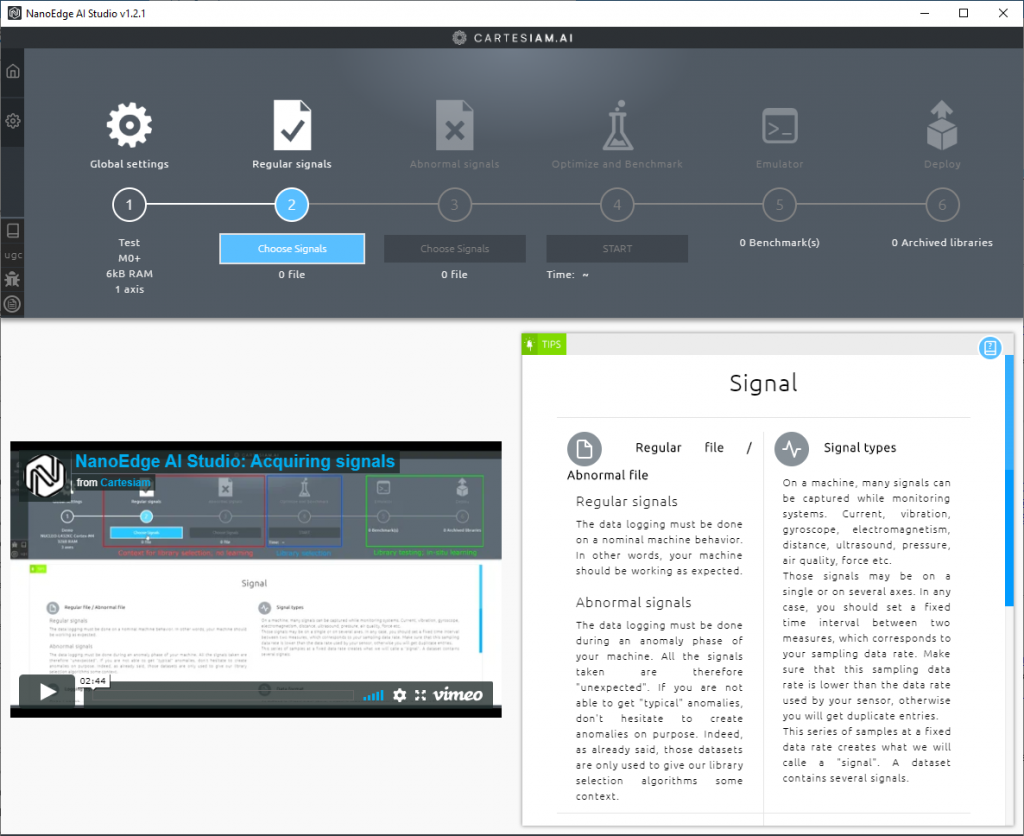

Once you’ve set things up, you click the “Create” button to launch your new project. This results in a new view that guides us through the process. As shown below, we’ve already established our global settings, so now we are poised to collect our regular (good) signals.

In the previous image, I showed only the upper portion of the NanoEdge AI Studio screen, but there’s much more as we see in the image below. In this case, there’s a video in the bottom left that discusses the process of acquiring signals. There’s also a discussion of the different signal types in the bottom right.

Poised to start collecting regular signals (Image source: Max Maxfield)

I’m not going to walk us through every screen; suffice it to say that when you click the “Choose Signals” button, you are presented with a number of options, including whether you wish to load your signal values live over the USB interface or from a file.

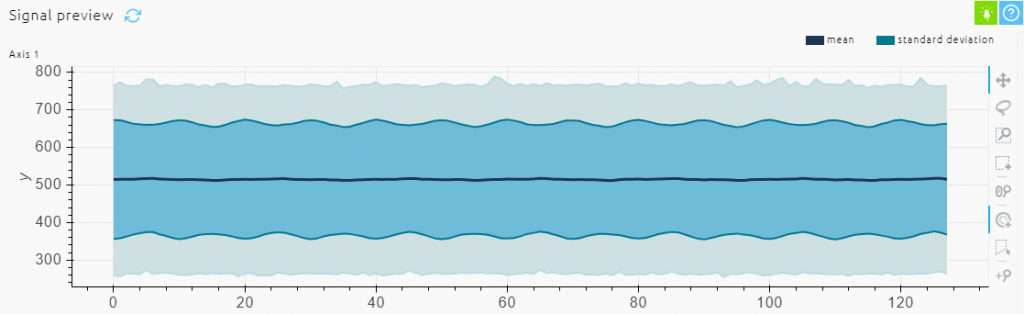

In the case of the regular (good) signals, we opted to load the values live over the USB interface. Louis whipped up a simple 26-line Arduino sketch (program) that loops around reading the current data and writing it to the serial port (which, on the Arduino, is carried over the USB connection). I’ll tell you how to get a copy of this sketch later. So, I powered up my Dirt Devil and started annoying the people in the neighboring offices while recording the data coming from the Arduino. When processed by NanoEdge AI Studio, a visualization of this regular data was presented as shown below.

Visualization of the regular data (Image source: Max Maxfield)
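The 26-line logging sketch itself lives in Louis’s repository and isn’t reproduced here. As a hedged illustration of the idea, the C++ below captures a fixed-length buffer of readings and prints it as one line of space-separated values, that is, one signal per line. The 256-sample buffer length, the space delimiter, and the `readSample` callback are assumptions; check the NanoEdge AI Studio documentation for the exact format your version expects. The function writes to a `std::ostream` so it can be exercised on a PC.

```cpp
#include <cstddef>
#include <functional>
#include <ostream>
#include <sstream>  // std::ostringstream, handy for host-side testing

// ASSUMED buffer length: the article doesn't state one. A fixed length
// keeps every signal line the same size.
constexpr std::size_t kBufferSize = 256;

// Capture `n` readings from the hypothetical `readSample` callback and write
// them to `out` as a single line of space-separated values (one signal per line).
void logOneSignal(const std::function<int()>& readSample, std::ostream& out,
                  std::size_t n = kBufferSize) {
    for (std::size_t i = 0; i < n; ++i) {
        if (i > 0) out << ' ';  // delimiter between values, none at line end
        out << readSample();
    }
    out << '\n';  // newline terminates the signal
}
```

On the Arduino, `readSample` would wrap `analogRead()` (ideally with a fixed delay between samples so every line covers the same time span), and the output would go to `Serial` rather than a stream.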

Next, we moved to the “Abnormal Signals” step in the process. It was at this point that we ran into a bit of a conundrum because, prior to starting, I’d emptied the Dirt Devil’s canister into the trash container that was conveniently located in the canteen area. The problem was that I now needed to collect some abnormal (bad) signals.

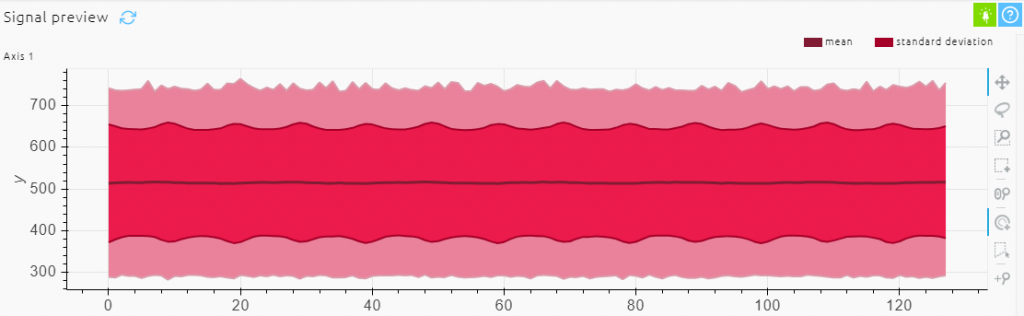

It struck me that people in the building might think I was a little strange (well, stranger than they already think) if they were to find me in the canteen rooting around in the trash grabbing handfuls of fluff and dust and stuffing them into my vacuum cleaner. As an alternative, my first solution was to cut out a circle of paper and use it to block the air filter.

In this case, we opted to load the signal data from a file. What this meant in practice was that we used the same sketch as before, but this time I opened the Arduino’s Serial Monitor window on my PC screen and let the signal data from the Arduino stream to that window. Once I had enough data, I copied it over to a standard *.txt file. The advantage of this file-based approach is that you can edit the data before loading it into NanoEdge AI Studio. This allows you to do things like removing anomalous data from the beginning and/or end of the run, if you so desire. When you are ready, you load this abnormal data file into the system. When processed by NanoEdge AI Studio, a visualization of this pseudo-abnormal data was presented as shown below.

Visualization of the pseudo-abnormal data (Image source: Max Maxfield)

If the truth be told, to my eye this didn’t look all that different to the regular data (apart from the color, of course), but we proceeded with the process and proved that NanoEdge AI Studio could tell the difference.
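Because the abnormal capture goes through a text file, it is easy to tidy it before loading. The helper below is a minimal C++ sketch of that editing step: it drops a chosen number of lines from the start and end of the capture (for example, while the motor spins up and down) and discards any line that doesn’t contain the expected number of values, such as a partial line copied from the Serial Monitor. The head/tail counts and the one-signal-per-line layout are assumptions, not part of the article.

```cpp
#include <cstddef>
#include <sstream>
#include <string>
#include <vector>

// Count whitespace-separated values on one line of the capture.
std::size_t countValues(const std::string& line) {
    std::istringstream in(line);
    std::string token;
    std::size_t n = 0;
    while (in >> token) ++n;
    return n;
}

// Drop `head` lines from the start and `tail` lines from the end, then keep
// only lines holding exactly `expected` values (discarding partial lines).
std::vector<std::string> trimCapture(const std::vector<std::string>& lines,
                                     std::size_t head, std::size_t tail,
                                     std::size_t expected) {
    std::vector<std::string> kept;
    if (head + tail >= lines.size()) return kept;  // nothing left after trimming
    for (std::size_t i = head; i < lines.size() - tail; ++i) {
        if (countValues(lines[i]) == expected) kept.push_back(lines[i]);
    }
    return kept;
}
```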

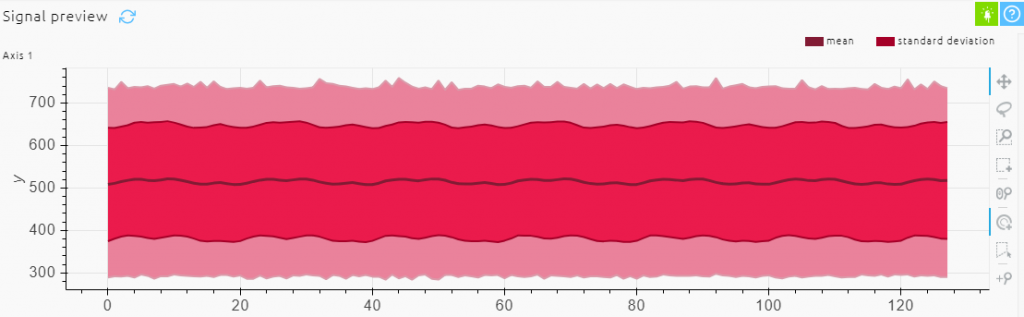

The problem was that I didn’t feel good about using pseudo-abnormal data. My parents brought me up to be the sort of fellow who would only ever use real abnormal data. To this end, Louis and I took a short break while I accompanied my Dirt Devil on a stroll around the building, vacuuming the corridors and offering to clean strangers’ offices until my dust canister was full-to-bursting, at which point we repeated the process with real abnormal data as shown below.

Visualization of the real abnormal data (Image source: Max Maxfield)

I think you will agree that all three of the above visualizations look somewhat similar to the eye, which makes what follows all the more impressive as far as I’m concerned.

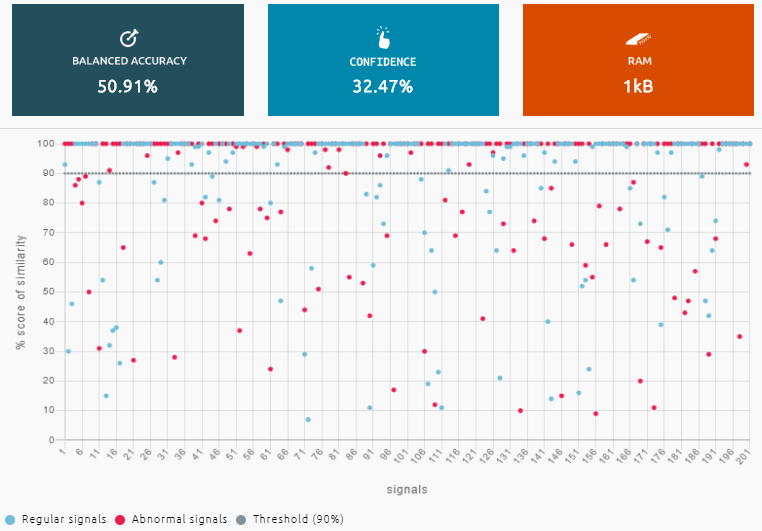

Now, armed with our regular signals and our real abnormal signals, we handed things over to NanoEdge AI Studio for optimization and benchmarking. The idea here is that the system experiments with different algorithms, selecting the optimal combination of algorithmic building blocks out of 500 million combinations and permutations, on a mission to achieve the best accuracy with the highest confidence whilst using the least amount of memory.

It has to be admitted that things didn’t look too good at the beginning as shown below. To be fair, however, this screenshot was taken only 10 seconds into the run.

A few seconds into experimenting with algorithms (Image source: Max Maxfield)

Note that this is only one of the displays provided by NanoEdge AI Studio. I actually found it quite fascinating to watch the system trying different approaches and then fine-tuning each scenario, only moving on to a new model if it provided superior results to the current best solution.
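The article doesn’t say how NanoEdge AI Studio weighs accuracy, confidence, and memory against one another, so the ranking in this C++ sketch is an assumption made purely for illustration: higher accuracy wins, then higher confidence, then the smaller RAM footprint. What it does show is the keep-the-best loop described above, where a newly tried candidate replaces the current best only if it is strictly better. The `Candidate` struct and its fields are hypothetical.

```cpp
#include <cstddef>
#include <optional>
#include <string>
#include <vector>

// Hypothetical record for one tried combination of algorithmic building blocks.
struct Candidate {
    std::string name;      // illustrative label
    double accuracy;       // percent
    double confidence;     // percent
    std::size_t ramBytes;  // memory footprint
};

// ASSUMED ranking: accuracy first, then confidence, then smaller RAM.
bool isBetter(const Candidate& a, const Candidate& b) {
    if (a.accuracy != b.accuracy) return a.accuracy > b.accuracy;
    if (a.confidence != b.confidence) return a.confidence > b.confidence;
    return a.ramBytes < b.ramBytes;
}

// Walk the candidates in the order they are tried, replacing the current
// best only when a newcomer is strictly better.
std::optional<Candidate> keepBest(const std::vector<Candidate>& tried) {
    std::optional<Candidate> best;
    for (const Candidate& c : tried) {
        if (!best || isBetter(c, *best)) best = c;
    }
    return best;
}
```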

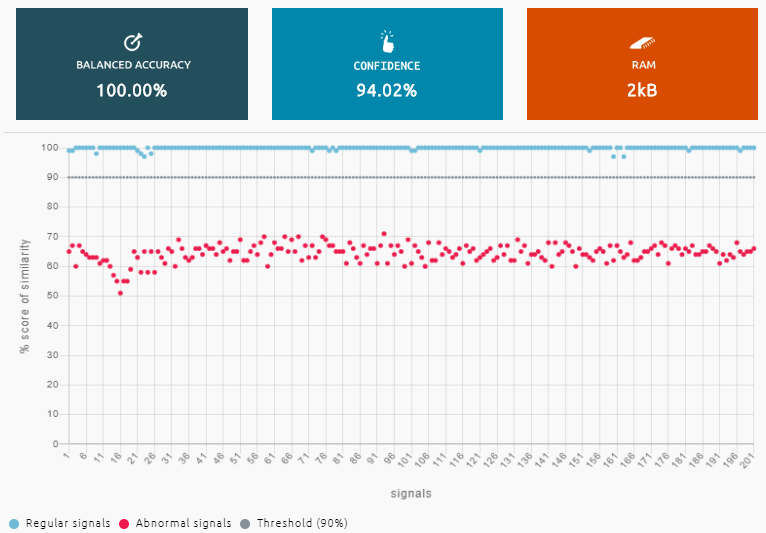

After a while, the system had arrived at a solution that offered 100% accuracy and a 94% confidence level. Most amazing to me was that the entire library (or model as I tend to think of it) consumed only 2KB of memory. Color me impressed!

We decided to call a halt once we had achieved 100% accuracy with 94% confidence using only 2KB of RAM (Image source: Max Maxfield)

I’m going to skip over the nitty-gritty details of the emulation stage and generating the user version of the library. Suffice it to say that, once we had our library, Louis created an 83-line program that included this library, trained it, and then used it to monitor the vacuum cleaner in action.

I must admit that, until I went through the entire process, I hadn’t fully appreciated some of the subtleties and nuances. For example, why did we need to train the library? After all, we already had the regular and abnormal data we’d used to create it in the first place.

Well, the thing is, once you’ve created a library, you can think of it as being in a generic state, as it were. Do you remember when I said that we have (at least) three vacuum cleaners at our house? The library (model) we just created can be used with all of these machines, but each will have a different operational signal profile, so it’s best to train the system with the machine with which it is to be used.

The example I usually think of in an industrial scenario is a pump intended to move liquids around. You might purchase two identical units and install them in different locations in the factory. One could be mounted on a concrete bed, while the other is mounted on a suspended floor; one may find itself in a cold and dry location, while the other ends up in a hot and humid environment; one may be connected to the outside world via short lengths of plastic piping, while the other is connected using long lengths of metal tube; and one may be pumping pure grain alcohol while the other is pumping olive oil. From this, it’s easy to see that the two devices could well exhibit different operational profiles, so it makes sense to train the AI/ML model on the system with which it will be working.

To cut a long story short, we trained my model using my Dirt Devil, and then we tested it out with an empty dust container and a full container. For this first pass, we used a slow-blinking LED to indicate a happy condition, while a fast-blinking LED was used to report an anomalous (“bag is full”) condition. I have to say that I was really impressed by how well this all worked.
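The article doesn’t describe what goes on inside the generated library, so the C++ sketch below swaps in a deliberately simple technique that is not NanoEdge AI’s algorithm. It learns the mean and standard deviation of one feature (the RMS of each signal buffer) from the machine it is attached to, using Welford’s running update, and then flags buffers whose RMS lies more than k standard deviations from that mean. It is only meant to illustrate the learn-on-this-machine, then-detect flow and the slow/fast blink convention; `ToyDetector`, `blinkHalfPeriodMs`, k = 3, and the 1000 ms / 100 ms timings are all hypothetical.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Toy stand-in for a trained library (NOT NanoEdge AI's algorithm).
class ToyDetector {
public:
    // Training step: fold one normal buffer's RMS into the running statistics.
    void learn(const std::vector<double>& buffer) {
        double x = rms(buffer);
        ++n_;                       // Welford's online mean/variance update
        double delta = x - mean_;
        mean_ += delta / n_;
        m2_ += delta * (x - mean_);
    }

    // Detection step: true if the buffer's RMS is more than k standard
    // deviations from the learned mean.
    bool isAnomaly(const std::vector<double>& buffer, double k = 3.0) const {
        if (n_ < 2) return false;  // not enough training data yet
        double sd = std::sqrt(m2_ / (n_ - 1));
        return std::fabs(rms(buffer) - mean_) > k * sd;
    }

    static double rms(const std::vector<double>& b) {
        if (b.empty()) return 0.0;
        double s = 0.0;
        for (double v : b) s += v * v;
        return std::sqrt(s / b.size());
    }

private:
    std::size_t n_ = 0;
    double mean_ = 0.0;
    double m2_ = 0.0;
};

// LED half-period in ms: slow blink when happy, fast blink when the bag looks full.
unsigned blinkHalfPeriodMs(bool anomaly) { return anomaly ? 100u : 1000u; }
```

Even in a toy like this, the learned mean and spread belong to one particular machine, which is why training on the target unit matters.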

If you want to try this out for yourself, Louis has very kindly taken what we did and documented the entire step-by-step process. You can access all of this, including the Arduino sketches, via the NEAI Arduino Current page on GitHub (where NEAI stands for “NanoEdge AI,” of course).

After you have this up and running, Louis has also posted an NEAI Arduino Current WiFi page that extends the original project by using the Arduino Nano 33 IoT’s Wi-Fi capability to send notifications to your smartphone when the vacuum cleaner’s bag/container is full (this is what I hope to be doing later today).
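Louis’s Wi-Fi project takes care of actually sending the message; the article doesn’t say how it decides when to send. One question any such project has to answer is how to avoid flooding your phone with a notification for every anomalous buffer. The hypothetical `NotifyGate` below is a minimal C++ sketch of one approach, assuming you want to wait for several consecutive anomalies (to ignore one-off glitches), notify once, and re-arm only after a normal reading.

```cpp
#include <cstddef>

// Hypothetical helper deciding when to fire a "bag is full" notification.
class NotifyGate {
public:
    explicit NotifyGate(std::size_t needed) : needed_(needed) {}

    // Feed one detection result; returns true exactly when a notification
    // should be sent.
    bool update(bool anomaly) {
        if (!anomaly) {        // a normal buffer resets and re-arms the gate
            streak_ = 0;
            sent_ = false;
            return false;
        }
        if (sent_) return false;  // already notified for this episode
        if (++streak_ >= needed_) {
            sent_ = true;
            return true;
        }
        return false;
    }

private:
    std::size_t needed_;
    std::size_t streak_ = 0;
    bool sent_ = false;
};
```

Calling `gate.update(isAnomaly)` once per buffer and sending the message only when it returns `true` yields at most one notification per run of anomalous readings.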

Last but not least, Louis told me to tell you that if you have different projects in mind or any questions, then you should feel free to contact the company at [email protected] or himself directly at [email protected]. (I’m assuming that when Louis says “any questions,” he would prefer queries related to NanoEdge AI Studio; in the case of questions relating to “Life, the Universe, and Everything,” I’m your man.) Speaking of which, as always, I welcome your comments, questions, and suggestions.