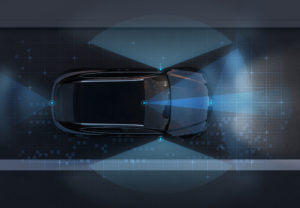

Lidar (short for light detection and ranging) is an increasingly common technology, built into recent iPhones and many self-driving car prototypes, that uses lasers to measure distances, allowing accurate remote mapping of both small and large spaces. On satellites, for instance, lidar is used to measure elevation; in self-driving cars, it’s used to map the road, obstacles, and other surroundings. Now, researchers at MIT are using machine learning to process lidar data in greater detail, in real time.
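Most lidar units measure distance by time of flight. They time how long a laser pulse takes to bounce back, and because the pulse travels out and back, the distance is d = c·t/2. Combining that range with the beam's known azimuth and elevation angles gives one 3D point, and a full sweep of such points forms a point cloud. The sketch below assumes a simple pulsed sensor (some lidars use other ranging schemes, such as FMCW), and its function names are hypothetical.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # exact by the SI definition of the metre


def tof_range_m(round_trip_s: float) -> float:
    """One-way distance from a pulse's round-trip time: d = c * t / 2."""
    # The pulse travels to the target and back, so halve the total path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def to_cartesian(range_m: float, azimuth_rad: float,
                 elevation_rad: float) -> tuple[float, float, float]:
    """Turn one range reading plus the beam angles into an (x, y, z) point."""
    ground = range_m * math.cos(elevation_rad)  # projection onto the x-y plane
    return (ground * math.cos(azimuth_rad),
            ground * math.sin(azimuth_rad),
            range_m * math.sin(elevation_rad))


if __name__ == "__main__":
    # A return arriving 200 ns after the pulse left comes from about 30 m away.
    r = tof_range_m(200e-9)
    print(f"{r:.2f} m")  # prints "29.98 m"
    # Same target, straight ahead along +x and level with the sensor.
    print(to_cartesian(r, 0.0, 0.0))
```

A sensor repeating this millions of times per second, across many beams and angles, is what produces the data volumes described next.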

The problem, in short: lidar sensors generate data far faster than onboard computers can process it. A typical lidar sensor can produce millions of depth data points per second, which quickly overwhelms the processing hardware built into, say, a car. As a result, many of those systems collapse the 3D lidar data into 2D, losing much of the detail in the translation.
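To see what gets lost when 3D data is flattened, here is a minimal sketch of one common flattening, a bird's-eye-view height map that keeps only the tallest point in each ground-plane cell. The article doesn't say which 2D projection existing systems use; this choice, the 0.5 m cell size, and the function name `bev_max_height` are assumptions for illustration.

```python
Point = tuple[float, float, float]  # (x, y, z) in metres, z pointing up


def bev_max_height(points: list[Point],
                   cell_m: float = 0.5) -> dict[tuple[int, int], float]:
    """Collapse a 3D point cloud into a 2D bird's-eye-view height map.

    Each point falls into a ground-plane cell by its (x, y) coordinates and
    only the tallest z per cell is kept; all other vertical detail is lost.
    """
    grid: dict[tuple[int, int], float] = {}
    for x, y, z in points:
        cell = (int(x // cell_m), int(y // cell_m))  # floor, so negatives work
        grid[cell] = max(z, grid.get(cell, float("-inf")))
    return grid


if __name__ == "__main__":
    # Hypothetical scene: a 15 cm curb under a branch 3 m up, same grid cell.
    with_curb = [(10.1, 2.1, 0.15), (10.2, 2.2, 3.0)]
    without_curb = [(10.2, 2.2, 3.0)]
    print(bev_max_height(with_curb))  # prints {(20, 4): 3.0}
    print(bev_max_height(with_curb) == bev_max_height(without_curb))  # prints True
```

In this toy scene the curb disappears entirely: the 2D map is identical whether or not it is there, which is the kind of detail a full 3D pipeline can keep.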

The MIT researchers, by contrast, use an end-to-end machine learning framework that takes in low-resolution GPS maps and raw 3D lidar data. To push massive amounts of lidar data through a deep learning model quickly enough for real-time driving, they designed new components for the model that make better use of GPUs. “We’ve optimized our solution from both algorithm and system perspectives, achieving a cumulative speedup of roughly 9x compared to existing 3D lidar approaches,” said Zhijian Liu, a PhD student at MIT and co-lead author of the paper, in an interview with MIT’s Adam Conner-Simons.
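The article doesn't describe the new GPU-oriented components. As a hedged illustration of the kind of idea that makes 3D lidar processing tractable, many 3D networks first voxelize the point cloud sparsely, storing only the voxels that actually contain points instead of a dense 3D grid. The sketch below shows that step under assumed parameters (a 0.25 m voxel and a 100 m × 100 m × 5 m region); the function names are hypothetical, and this is not the MIT team's implementation.

```python
import math

Point = tuple[float, float, float]
Voxel = tuple[int, int, int]


def sparse_voxelize(points: list[Point],
                    voxel_m: float = 0.25) -> dict[Voxel, tuple[float, float, float]]:
    """Map each occupied voxel index to the mean of the points inside it.

    Only voxels that contain at least one point get an entry; empty space
    costs nothing, unlike a dense 3D array over the same region.
    """
    sums: dict[Voxel, list[float]] = {}
    for x, y, z in points:
        key = (int(x // voxel_m), int(y // voxel_m), int(z // voxel_m))
        acc = sums.setdefault(key, [0.0, 0.0, 0.0, 0.0])  # sum x, y, z, count
        acc[0] += x
        acc[1] += y
        acc[2] += z
        acc[3] += 1.0
    return {k: (s[0] / s[3], s[1] / s[3], s[2] / s[3]) for k, s in sums.items()}


def dense_voxel_count(extent_m: tuple[float, float, float],
                      voxel_m: float = 0.25) -> int:
    """How many voxels a dense grid over an x/y/z extent (metres) would hold."""
    nx, ny, nz = (math.ceil(e / voxel_m) for e in extent_m)
    return nx * ny * nz


if __name__ == "__main__":
    # Hypothetical sweep: three returns, the first two in the same voxel.
    pts = [(1.00, 1.00, 0.10), (1.05, 1.10, 0.12), (8.00, -3.00, 1.50)]
    occupied = sparse_voxelize(pts)
    dense = dense_voxel_count((100.0, 100.0, 5.0))
    print(len(occupied), "occupied vs", dense, "dense")  # prints 2 occupied vs 3200000 dense
```

Because lidar returns land mostly on surfaces, occupied voxels are typically a small fraction of a dense grid, which is why sparse data structures and sparse operations can save so much computation.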

Early tests show that the MIT system reduced how often a human had to take over and withstood major sensor failures. Much of this robustness comes from the system’s bet-hedging: it estimates how certain it is about each prediction, then weights that prediction accordingly. This helps protect the system against misleading inputs, such as noisy lidar data in bad weather. “By fusing the control predictions according to the model’s uncertainty, the system can adapt to unexpected events,” said Daniela Rus, a professor of electrical engineering and computer science at MIT and one of the paper’s senior authors.
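Fusing predictions according to their uncertainty can be illustrated with inverse-variance weighting, a standard way to combine independent estimates: each prediction is weighted by 1/σ², so confident estimates dominate. This is a swapped-in textbook technique, not necessarily the fusion rule used in the MIT paper, and the `Prediction` type and `fuse` function are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    """One control estimate plus the model's own uncertainty about it."""
    value: float     # e.g. a steering command (hypothetical units)
    variance: float  # larger variance means less confidence


def fuse(predictions: list[Prediction]) -> Prediction:
    """Inverse-variance weighted average of independent predictions.

    Each estimate is weighted by 1 / variance, so confident predictions
    dominate and very uncertain ones (say, from rain-corrupted lidar)
    barely move the result.
    """
    if not predictions:
        raise ValueError("need at least one prediction")
    if any(p.variance <= 0 for p in predictions):
        raise ValueError("variances must be positive")
    weights = [1.0 / p.variance for p in predictions]
    total = sum(weights)
    value = sum(w * p.value for w, p in zip(weights, predictions)) / total
    # The fused variance shrinks as more (independent) evidence is added.
    return Prediction(value=value, variance=1.0 / total)


if __name__ == "__main__":
    confident = Prediction(value=0.10, variance=0.01)
    unreliable = Prediction(value=0.90, variance=1.00)
    fused = fuse([confident, unreliable])
    print(round(fused.value, 3))  # prints 0.108
```

The fused value stays close to the confident estimate, and the fused variance, 1/Σ(1/σᵢ²), is smaller than that of either input.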

The researchers hope their work will help pave the way for self-driving systems that need less manual intervention, from both programmers and drivers, to perform well. “We’ve taken the benefits of a mapless driving approach and combined it with end-to-end machine learning so that we don’t need expert programmers to tune the system by hand,” said Alexander Amini, the paper’s other co-lead author and also a PhD student at MIT.

Next, the researchers plan to scale the system to handle more real-world complexity, including adverse weather and interactions with other vehicles on the road.


Source: https://www.datanami.com/2021/06/07/mit-researchers-leverage-machine-learning-for-better-lidar/