Parsel, an AI framework created by a group of researchers at Stanford, uses large language model (LLM) reasoning to transform hierarchical functions descriptions in natural language into an implementation in code. Additionally, the researchers maintain, Parsel can be used for robot planning and theorem proving.

As InfoQ already covered, a number of LLMs are already available to transform a textual description of a program into code, such as OpenAI Codex, the open source PolyCoder, GitHub Copilot, and others GPT-3 based models.

Parsel attempts to go a step beyond what code LLMs already provide by mirroring a pattern used by human programmers to generate complex programs, taht is “decomposing an abstract plan until it can be solved automatically”, the researchers explains in their paper.

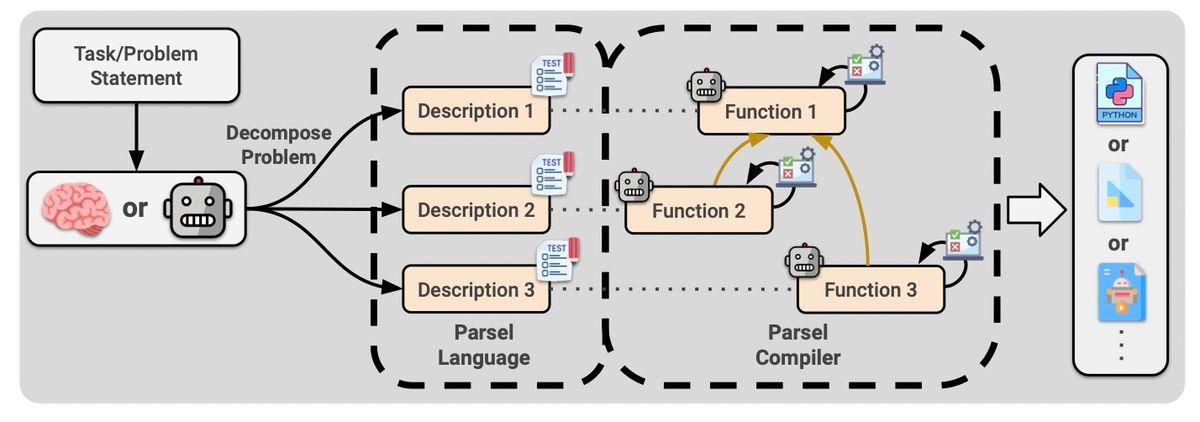

The general idea behind Parsel is the following: a human or LLM uses the Parsel language to describe how a task could be decomposed in sub-tasks, called strongly-connected components (SCC). The Parsel compiler then uses a code LLM and a constraint solver to implement each SCC. Finally, the implemented SCCs are composed back into a program that carries through the original task.

We show that LLMs can generate Parsel with only a few examples, and their solutions outperform prior work on competition-level problems in the APPS dataset, including AlphaCode and both (compositional). And, both versions of Codex.

The Parsel compiler accepts a natural language description of the functions to implement and a set of constraints that must be satisfied, for example a collection of unit tests. Given this input, a code LLM is prompted to generate implementations for each function and combine them until it finds one that satisfies the constraints.

This is an example of how you could describe a part of Conway’s Game of Life, including a constraint indicating the function should return an integer when called with input ([[1, 0], [0, 1]], 0, 0):

count_living_neighbors(grid, i, j): count the number of living neighbors of the cell at the index (i, j)

type_fn_output(fn, args): returns the type of the output of a function called with args

count_living_neighbors, ([[1, 0], [0, 1]], 0, 0) -> int

The simplest case for Parsel is one where all functions have constraints and none of them is recursive.

In this situation, […] without any cycles in the call graph, we can start by implementing the leaf functions first, then their parents, etc. until the program is implemented.

Recursion and functions with no constraints make things harder. In this case, the complexity of recombining SCCs grows exponentially with the size of the largest SCC. To make recombination tractable, Parsel considers the sets of functions whose definitions depend on one another and make the assumption that statefulness across them does not interfere with dependent asserts to be able to find one set that satisfies all constraints.

A key step for Parsel to provide correct programs is decomposition. Indeed, if a function description is too complex, say the researchers, the code LLM used to translate that into code could not be able to do it correctly. In such cases, the solution would be further decomposing the complex functions into simpler ones.

Related to that, the quality of the code produced by a code LLM may in general vary significantly with the output language, with languages under-represented in training data performing worse.

While Parsel has proved to be able to implement robust algorithmic reasoning, there are a number of questions still open and many opportunities to extend and improve the framework, say the researchers.

About the Author

Sergio De Simone

Show moreShow less

Source: https://news.google.com/__i/rss/rd/articles/CBMiOmh0dHBzOi8vd3d3LmluZm9xLmNvbS9uZXdzLzIwMjMvMDIvcGFyc2VsLWNvZGUtZ2VuZXJhdGlvbi_SAQA?oc=5